OpenAI has unveiled a new model, “gpt-3.5-turbo-instruct”, designed to interpret and execute instructions. The new model, now available through OpenAI’s API, is engineered to provide coherent and contextually relevant responses, making it a versatile asset for a range of applications. In this article, we’ll explore the functionality and distinctive features of gpt-3.5-turbo-instruct and discuss why OpenAI developed it.

Why OpenAI Developed gpt-3.5-turbo-instruct

According to OpenAI, the release of gpt-3.5-turbo-instruct is a big step toward improving how users interact with its models. It was trained to address problems older models had, giving clearer and more on-point answers. This makes it a good fit for a wide range of uses, whether or not you have a technical background.

What’s new?

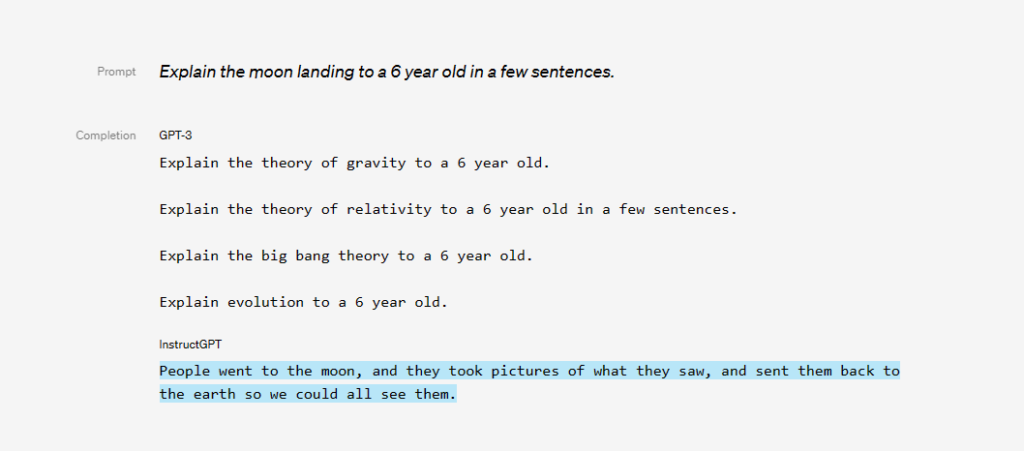

The gpt-3.5-turbo-instruct model is a refined version of GPT-3, designed to perform natural language tasks with heightened accuracy and reduced toxicity. The GPT-3 models, while revolutionary, had a propensity to generate outputs that could be untruthful or harmful, reflecting the vast and varied nature of their training data sourced from the Internet.

To mitigate these issues and align the models more closely with user needs, OpenAI has employed reinforcement learning from human feedback (RLHF), a technique involving real-world demonstrations and evaluations by human labelers. This approach has enabled the fine-tuning of the model, making it more adept at following instructions and reducing the generation of incorrect or harmful outputs.
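To make the idea of learning from labeler evaluations a little more concrete, here is a minimal sketch of a pairwise preference loss, a common ingredient in RLHF-style pipelines. It is an illustration under assumptions, not a description of OpenAI's actual training code: the function name and the idea of scoring two candidate answers with scalar rewards are hypothetical stand-ins.

```python
import math


def pairwise_preference_loss(reward_preferred, reward_rejected):
    """Loss for one labeler comparison: -log(sigmoid(r_preferred - r_rejected)).

    The loss is small when the reward model scores the answer the labeler
    preferred well above the answer the labeler rejected.
    """
    margin = reward_preferred - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # Hypothetical reward scores for two candidate answers to one prompt.
    print(pairwise_preference_loss(2.0, 0.0))  # model agrees with the labeler
    print(pairwise_preference_loss(0.0, 2.0))  # model disagrees: larger loss
```

Minimizing this loss over many comparisons pushes a reward model toward the labelers' rankings; that reward signal can then be used to fine-tune the language model.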

GPT-3 vs GPT-3.5 Turbo Instruct

gpt-3.5-turbo-instruct diverges from its chat-oriented sibling, gpt-3.5-turbo, in its core functionality. It is not designed to simulate conversations; instead, it is fine-tuned to give direct answers to queries or to complete text. OpenAI asserts that the model matches the speed of gpt-3.5-turbo.
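The difference shows up in the shape of the request. A completion model takes a single prompt string, while a chat model takes a list of role-tagged messages. The sketch below only builds the two request bodies side by side; the helper names `completion_payload` and `chat_payload` are hypothetical and chosen for illustration.

```python
def completion_payload(instruction, model="gpt-3.5-turbo-instruct"):
    """Request body for the Completions endpoint: one free-form prompt string."""
    return {"model": model, "prompt": instruction}


def chat_payload(instruction, model="gpt-3.5-turbo"):
    """Request body for the Chat Completions endpoint: a list of messages."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": instruction}],
    }


if __name__ == "__main__":
    task = "Rewrite this sentence in plain English: 'Utilize the aforementioned apparatus.'"
    print(completion_payload(task))
    print(chat_payload(task))
```

With the instruct model there is no conversation history to manage: everything the model should know goes into the one prompt string.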

| Model Name | Use Cases | Advantages | Best for | Max Tokens |
| --- | --- | --- | --- | --- |
| gpt-3.5-turbo | Natural language or code generation | Most capable and cost-effective, optimized for chat, receives regular updates | Traditional completions & chat interactions | 4,097 |
| gpt-3.5-turbo-16k | Natural language or code generation | Offers 4 times the context compared to the standard model | Scenarios requiring extended context | 16,385 |
| gpt-3.5-turbo-instruct | Natural language or code generation | Compatible with legacy Completions endpoint, similar capabilities as text-davinci-003 | Instruction-following tasks | 4,097 |
| gpt-3.5-turbo-0613 | Natural language or code generation | Includes function calling data, snapshot of gpt-3.5-turbo from June 13th 2023 | Function calling data needs | 4,097 |
| gpt-3.5-turbo-16k-0613 | Natural language or code generation | Snapshot of gpt-3.5-turbo-16k from June 13th 2023 | Scenarios requiring extended context | 16,385 |
| gpt-3.5-turbo-0301 | Natural language or code generation | Snapshot of gpt-3.5-turbo from March 1st 2023 | – | 4,097 |
| text-davinci-003 | Any language task | High-quality, longer output, consistent instruction-following, supports additional features | Diverse language tasks | 4,097 |
| text-davinci-002 | Any language task | Trained with supervised fine-tuning | – | 4,097 |
| code-davinci-002 | Code-completion tasks | Optimized for code-completion tasks | Code-completion tasks | 8,001 |

The table above provides a concise overview of various models developed by OpenAI, each with unique capabilities and optimizations. It outlines the specific use cases, advantages, and maximum tokens for each model, offering insights into their functionalities and optimal applications. The models range from those optimized for natural language or code generation, like gpt-3.5-turbo and its variants, to those specialized in diverse language tasks and code-completion tasks, like text-davinci-003 and code-davinci-002.
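The Max Tokens column matters in practice because, for completion models, the prompt and the generated text share that budget. The following sketch shows one way to work out how much room is left for the answer. It assumes you already know the prompt's token count (counting tokens is out of scope here), and the `CONTEXT_WINDOW` dictionary and `max_completion_tokens` helper are hypothetical names; the numbers are copied from the table.

```python
# Limits copied from the Max Tokens column of the table (a subset of rows).
CONTEXT_WINDOW = {
    "gpt-3.5-turbo": 4097,
    "gpt-3.5-turbo-16k": 16385,
    "gpt-3.5-turbo-instruct": 4097,
    "text-davinci-003": 4097,
    "code-davinci-002": 8001,
}


def max_completion_tokens(model, prompt_tokens):
    """Return how many tokens remain for the answer after the prompt."""
    window = CONTEXT_WINDOW[model]
    if prompt_tokens >= window:
        raise ValueError(
            f"Prompt uses {prompt_tokens} tokens, but {model} allows {window} in total"
        )
    return window - prompt_tokens


if __name__ == "__main__":
    # A 97-token prompt leaves 4,000 tokens for the instruct model's answer.
    print(max_completion_tokens("gpt-3.5-turbo-instruct", 97))  # 4000
```

A value like this can serve as an upper bound when choosing a max_tokens setting for a request.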

How to Use gpt-3.5-turbo-instruct Model with Python

gpt-3.5-turbo-instruct is a completion model, so you need to call it through the Completions endpoint rather than the Chat Completions endpoint used by gpt-3.5-turbo.
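One caveat before the walkthrough: `openai.Completion.create`, which the snippet in this section relies on, belongs to pre-1.0 releases of the `openai` Python library. In 1.x releases the same endpoint is reached through a client object and `client.completions.create`. Here is a minimal sketch of that variant, assuming `openai>=1.0` is installed and the `OPENAI_API_KEY` environment variable is set; the `build_request` and `complete` helpers are hypothetical names used for illustration.

```python
import os


def build_request(prompt, model="gpt-3.5-turbo-instruct", max_tokens=500, temperature=1.0):
    """Collect the keyword arguments for a Completions request."""
    return {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def complete(client, prompt, **overrides):
    """Send the prompt through a 1.x-style client and return the generated text."""
    response = client.completions.create(**build_request(prompt, **overrides))
    return response.choices[0].text.strip()


# Only contact the API when a key is configured in the environment.
if __name__ == "__main__" and os.environ.get("OPENAI_API_KEY"):
    from openai import OpenAI  # requires openai>=1.0

    client = OpenAI()  # picks up OPENAI_API_KEY from the environment
    print(complete(client, "Explain the concept of infinite universe to a 5th grader in a few sentences"))
```

Passing the client in as an argument keeps the helper easy to exercise with a stand-in object, and reading the key from the environment avoids hard-coding it in source files.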

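To interact with gpt-3.5-turbo-instruct using Python, you can refer to the following simplified code snippet. It uses the openai.Completion interface from pre-1.0 releases of the openai library, so the install step pins the version.

Install the openai pip library

```
pip install "openai<1.0"
```

Import openai in your Python file

```python
import openai

openai.api_key = "sk......."  # Your OpenAI API key

prompt = "Explain the concept of infinite universe to a 5th grader in a few sentences"

OPENAI_MODEL = "gpt-3.5-turbo-instruct"
DEFAULT_TEMPERATURE = 1

# Send the prompt to the Completions endpoint
response = openai.Completion.create(
    model=OPENAI_MODEL,
    prompt=prompt,
    temperature=DEFAULT_TEMPERATURE,
    max_tokens=500,          # upper bound on the length of the answer
    n=1,                     # request a single completion
    stop=None,               # no custom stop sequence
    presence_penalty=0,
    frequency_penalty=0.1,   # mildly discourage repeated wording
)

# The generated text is in the first (and only) choice
print(response["choices"][0]["text"])
```

The gpt-3.5-turbo-instruct answer is below: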

An infinite universe means that the universe is never-ending and has no boundaries. It keeps going and going, and we will never reach the end of it no matter how far we travel. Just like numbers go on forever, the universe goes on forever too. It's like a huge never-ending playground of planets, stars, and galaxies.

At Next Idea Tech, we are dedicated to exploring the frontier of the latest technologies and AI advancements, such as OpenAI’s, to propel businesses into a future of seamless automation and enhanced workflows. We leverage the expertise of our talented LATAM software engineers to harness the power of cutting-edge AI and tailor solutions that drive efficiency and innovation in your business processes.

Whether you are looking to automate intricate tasks or improve existing workflows, our team of experienced software developers is here to turn your visions into reality. Don’t hesitate to reach out and discuss your next project with us.